Unofficial AI tools and browser extensions are turning helpful prompts into data leaks: employees routinely paste sensitive information into chatbots and unknowingly expose it. This post explains how “Shadow AI” usage spills corporate secrets and offers concrete defenses such as DLP rules, governance, and secure AI alternatives to plug those leaks.

Employees love the speed of AI assistants, but that convenience is creating a shadow data leak. Recent surveys and research show tens of millions of employee prompts being sent to consumer AI tools, often with sensitive content. For example, one analysis of 22 million enterprise AI prompts found 579,113 sensitive data exposures, with just six popular AI apps accounting for 92.6% of those leaks.

This is important now because workers routinely copy proprietary information, PII, or credentials into chatbots and extensions outside IT’s view, essentially exfiltrating data every time. In this article, you’ll learn what “Shadow AI” is, how well-meaning prompts can leak data, and what practical steps can stop those leaks.

What Is Shadow AI

Shadow AI is the use of unsanctioned AI tools by employees. It includes everything from off-the-shelf chatbots (ChatGPT, Bard, etc.) to browser extensions and code assistants not approved by IT. Key terms: a prompt is the text a user gives an AI model; an LLM (Large Language Model) is the underlying generative AI; and data exfiltration is the unauthorized removal of data. In plain terms, whenever someone pastes work content into an unauthorized AI tool, that content can be transmitted to and stored by the AI provider. Unlike the shadow IT of the past, shadow AI tools consume the data they are given: they may keep it, learn from it, or even inadvertently expose it.

How It Works

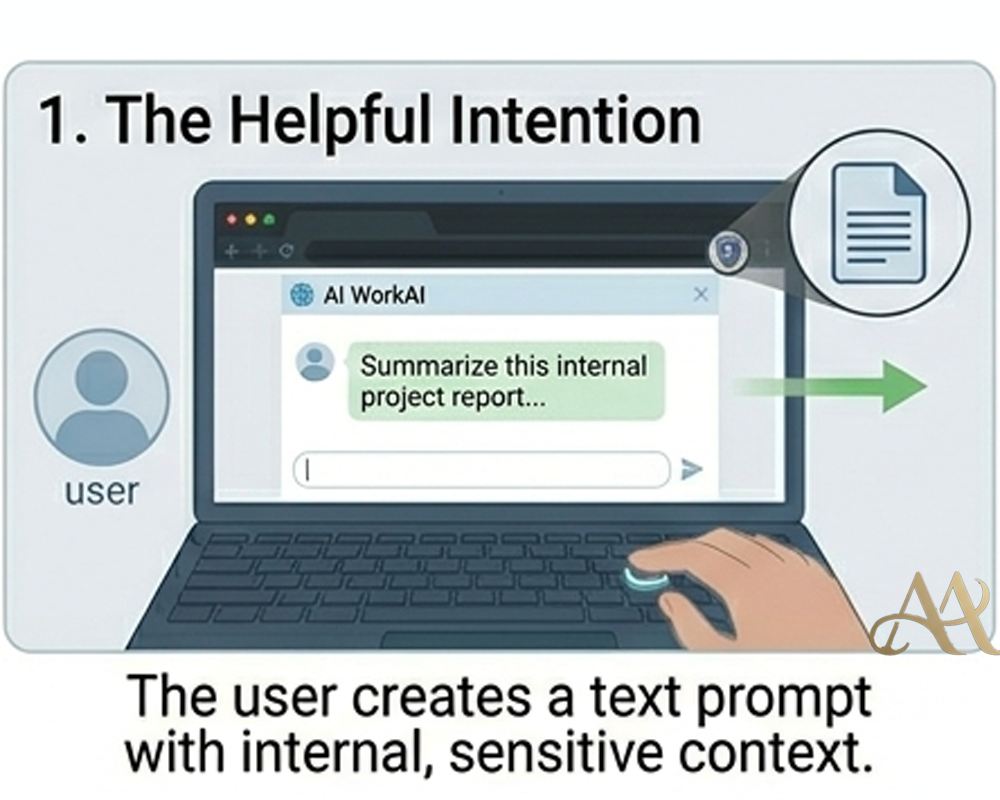

- User Prompt: An employee pastes data into an AI tool. This might be business plans, customer data, or even login credentials. For example, a developer might input proprietary code into a chatbot to debug it.

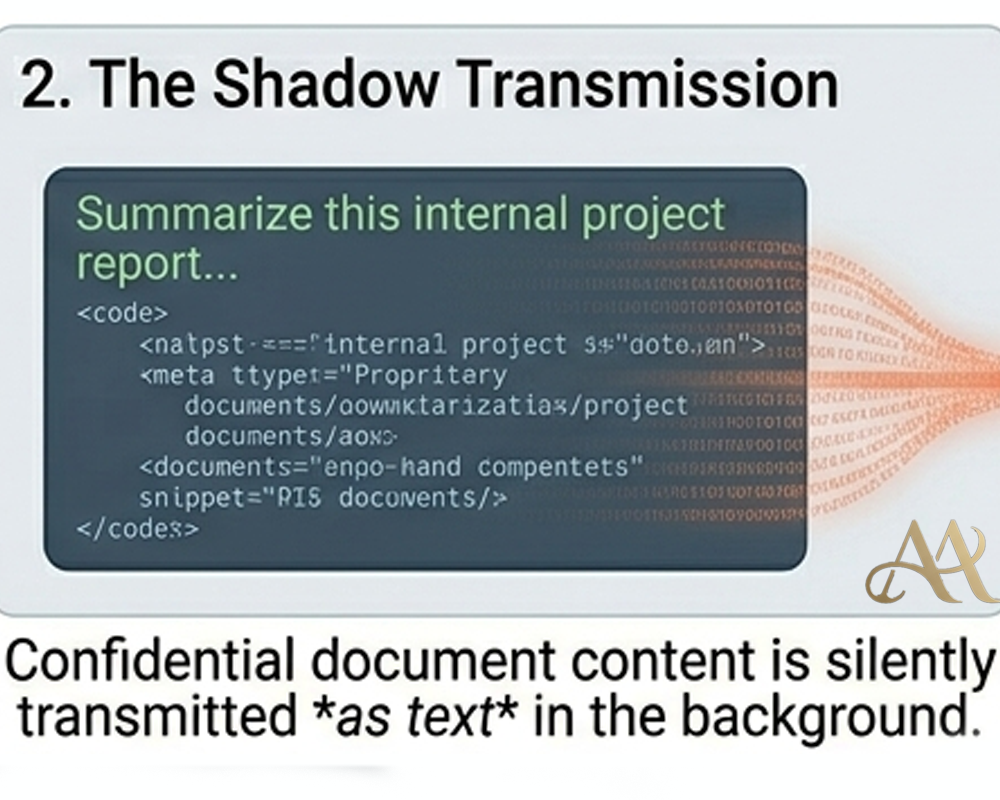

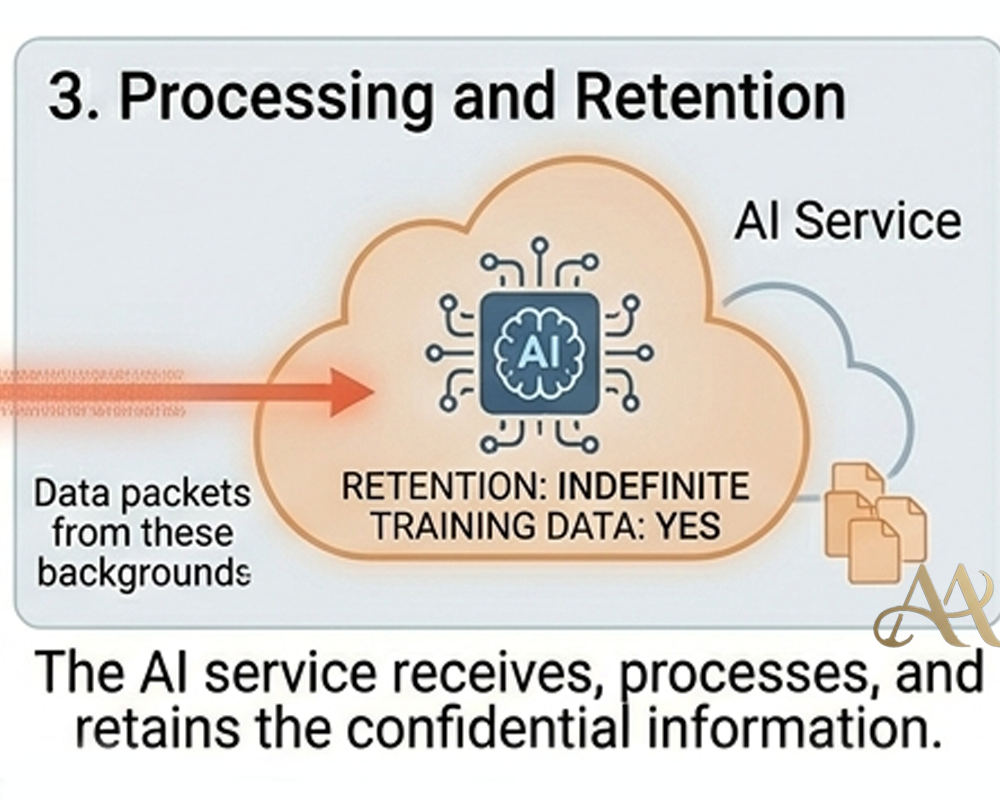

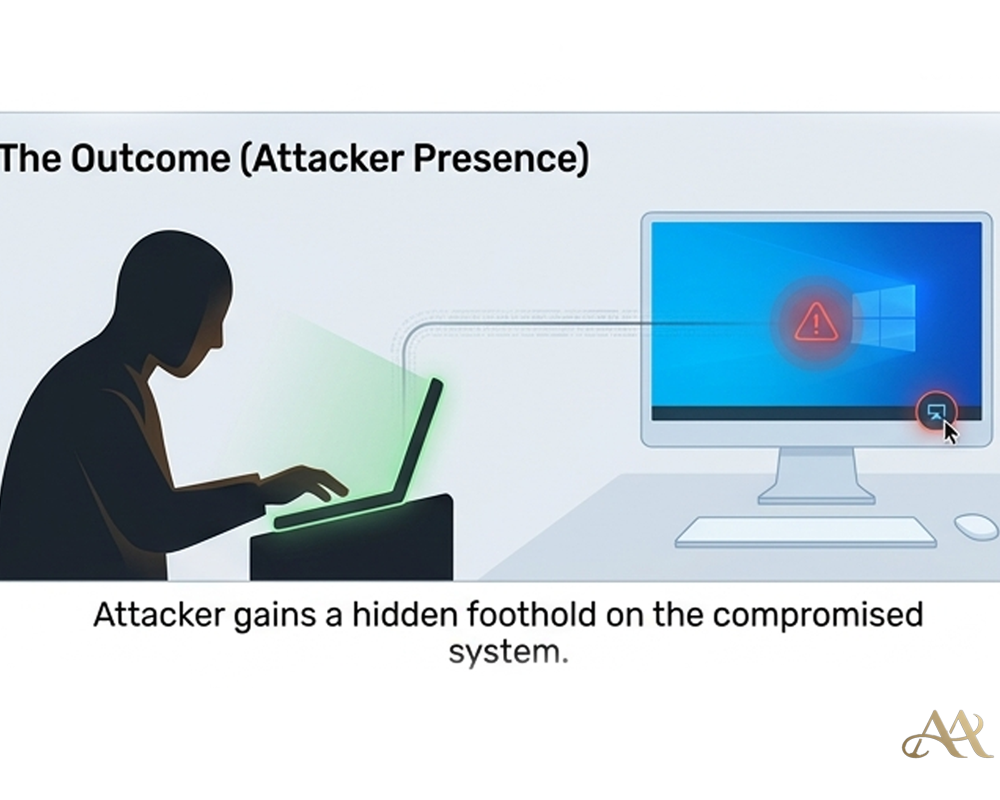

- Data Transmission: The AI tool receives the prompt over HTTPS and processes it. Because these tools run in the cloud, the user’s data leaves the corporate environment automatically. (In MITRE ATT&CK terms, this is akin to Exfiltration Over Web Service (T1567), except that the “attacker” is the tool itself.)

- Storage & Sharing: The AI provider may store the prompt and its response. If it’s a free or personal account, there is often no enterprise control or data retention policy. As BlackFog notes, fake AI browser extensions were found “sending complete AI chat histories containing proprietary source code, business strategies, and confidential research” to attackers. In other words, every helpful prompt became an unwanted data leak.

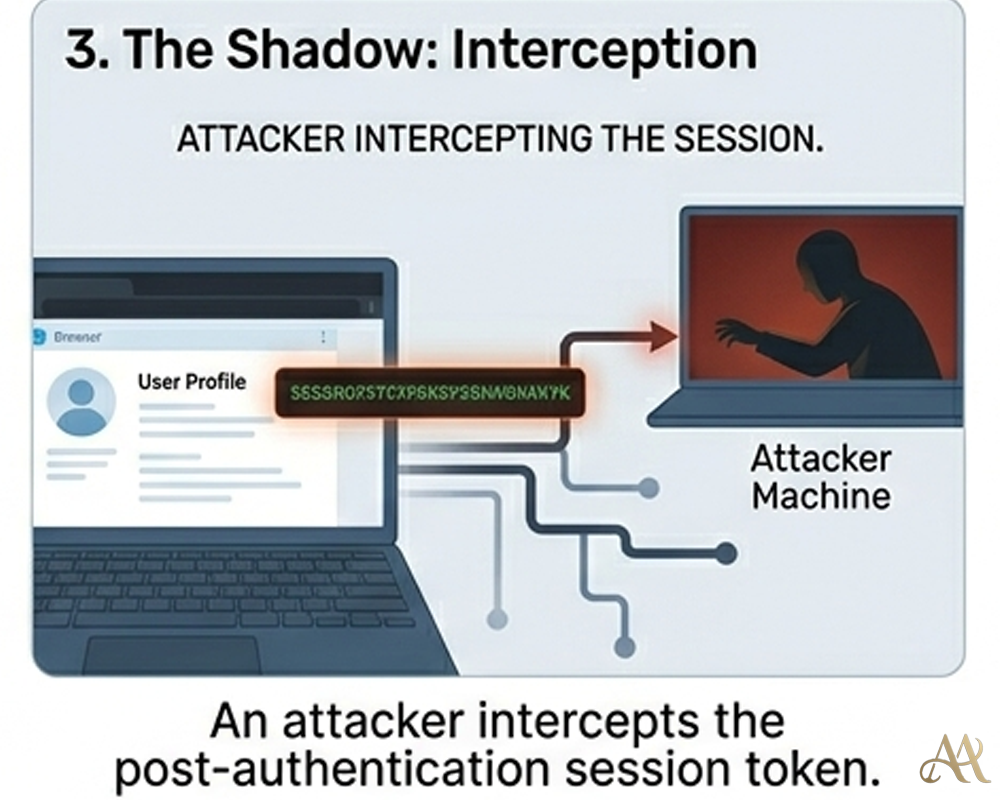

- Data Exposure: If the AI system is breached, or an insider at the AI company misuses data, the sensitive text can surface externally. Attackers can also scour public “training data” repositories. Even without an external breach, uncontrolled AI usage means that data is circulating beyond company security.
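The transmission step above can be made concrete with a small sketch. It builds, but never sends, the kind of HTTPS request a chat client might make. The endpoint URL, the JSON shape, and the sample credential are all hypothetical, since real AI services each define their own APIs. The point is only that pasted text travels verbatim inside the request body.

```python
import json

# Hypothetical endpoint for illustration; not a real service.
CHAT_ENDPOINT = "https://chat.example.com/api/conversation"


def build_chat_request(pasted_text: str) -> dict:
    """Build (but do not send) the request a chat client might issue."""
    # Assumed payload shape: a list of messages with a role and content.
    body = json.dumps({"messages": [{"role": "user", "content": pasted_text}]})
    return {
        "method": "POST",
        "url": CHAT_ENDPOINT,
        "headers": {"Content-Type": "application/json"},
        "body": body,
    }


# A developer asking for debugging help, with a made-up secret in the snippet.
prompt = "Fix this: db.connect(user='svc_app', password='EXAMPLE-ONLY-s3cret')"
request = build_chat_request(prompt)

# TLS protects the body in transit, but the provider still receives
# the pasted secret in full.
secret_in_body = "EXAMPLE-ONLY-s3cret" in request["body"]
print(secret_in_body)
```

HTTPS only protects the data on the wire. Once the request arrives, what happens to the content depends entirely on the provider's storage and retention practices.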

Real-World Impact

- Individuals: Personal data and credentials can leak. An employee who pastes their SSN into a chatbot risks identity theft if that data is not protected. In one survey, 27% of workers admitted sharing employee records or payroll info with unsanctioned AI.

- Businesses: Proprietary information (source code, R&D, financial reports) often ends up in prompts. In BlackFog’s research, 33% of employees shared research data with free AI tools, 27% shared staff data, and 23% shared financials. WithSecure reports that 50% of user AI inputs contained corporate material. This can lead to IP theft and regulatory fines (e.g. under GDPR) if that data leaks. IBM found that breaches involving AI misuse cost substantially more per incident.

- Systems & Infrastructure: Shadow AI bypasses logging and controls. Traffic to consumer AI sites is often invisible to the corporate SIEM. An attacker who learns an internal URL from a leaked prompt gains reconnaissance on company systems. Over time, widespread unauthorized AI use undermines governance and broadens the organization’s attack surface.

Common Mistakes or Misconceptions

- “It’s just a harmless chatbot.” Not true. Every prompt risks pushing sensitive data outside corporate boundaries.

- “Free AI is safe because it’s ‘useful.’” Free tools often lack security or data governance. Mimecast found 80% of organizations worry about AI data leaks, yet 60% have no plan to address them.

- “We can just ban it.” Blanket bans often fail (employees find workarounds) and ignore legitimate use. Instead, many experts advise safer alternatives.

- “Traditional DLP will catch this.” DLP focused on email/files may miss AI chat. Without specific monitoring of AI traffic, prompts slip by unnoticed.

- “Prompts aren’t data exfiltration.” They are. Prompting an AI is essentially transmitting data to an external service, just like emailing a file.
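As a rough illustration of the AI-specific monitoring mentioned above, the sketch below scans proxy-style log records for traffic to AI domains and lists users whose outbound volume exceeds a threshold. The record fields (`user`, `host`, `bytes_out`), the short watchlist, and the 50,000-byte threshold are assumptions made for this example. A real deployment would use its own log schema and a maintained domain list.

```python
from collections import Counter

# Illustrative, non-exhaustive watchlist (an assumption for this sketch).
AI_DOMAINS = {"chatgpt.com", "claude.ai", "gemini.google.com"}


def is_ai_domain(hostname: str) -> bool:
    """True if hostname is a watched domain or one of its subdomains."""
    host = hostname.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in AI_DOMAINS)


def ai_bytes_by_user(log_records) -> Counter:
    """Sum outbound bytes sent to watched AI domains, per user."""
    totals = Counter()
    for rec in log_records:
        if is_ai_domain(rec["host"]):
            totals[rec["user"]] += rec["bytes_out"]
    return totals


def users_to_alert(log_records, max_bytes=50_000):
    """Return users whose AI-bound upload volume exceeds the threshold."""
    totals = ai_bytes_by_user(log_records)
    return sorted(user for user, sent in totals.items() if sent > max_bytes)


# Hypothetical log records for demonstration.
sample_logs = [
    {"user": "alice", "host": "chatgpt.com", "bytes_out": 40_000},
    {"user": "alice", "host": "api.claude.ai", "bytes_out": 20_000},
    {"user": "bob", "host": "intranet.example.com", "bytes_out": 900_000},
    {"user": "carol", "host": "gemini.google.com", "bytes_out": 1_200},
    {"user": "dave", "host": "notclaude.ai", "bytes_out": 999_999},
]

flagged = users_to_alert(sample_logs)
print(flagged)
```

Matching on the full domain or a dot-prefixed suffix avoids false positives from lookalike hostnames such as `notclaude.ai`. Volume is only a coarse signal, so a check like this complements content inspection rather than replacing it.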

Practical Defensive Measures

- Data Loss Prevention (DLP): Implement DLP rules that inspect clipboard or browser paste events for sensitive content before it’s sent out. For instance, block or flag prompts containing SSNs, credit card numbers, or confidential keywords. Modern DLP can even enforce “redaction” of confidential fields before data leaves the environment.

- Identity and Access Management (IAM): Enforce context-based policies. Only allow access to sensitive data within sanctioned apps. Use conditional access so that high-risk queries require strong authentication or fail. Require MFA for cloud accounts to mitigate compromised sessions.

- Logging and Monitoring: Enable logging of AI tool access (such as API calls, DNS queries to known AI domains, or browser extension installs). Send logs to the SIEM and alert on anomalies (e.g. unexpected data uploads to AI services). According to NCSC, you cannot fully prevent prompt-based data leaks, so focus on visibility and response.

- Least Privilege & Encryption: Minimize what data users can even retrieve. For example, use encrypted tokens or masked data in prompts. Don’t allow service accounts with broad data access to interface with unsanctioned AI.

- Governance & Training: Officially sanction and configure AI tools with privacy settings. Provide secure approved AI platforms (enterprise LLMs, on-premise models) as alternatives. Train staff: explain that corporate data should never be pasted into unvetted AI, just as they wouldn’t email it to a stranger.
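The DLP rule described above can be sketched as a small filter that runs on prompt text before it leaves the environment. This is a minimal illustration, not a production DLP engine. The regular expressions, the keyword list, the placeholder strings, and the block/flag policy are all assumptions chosen for the example, and commercial DLP products expose their own rule formats.

```python
import re

SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
# 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_RE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")
KEYWORDS = ("confidential", "internal only")  # hypothetical keyword list


def luhn_valid(number: str) -> bool:
    """Luhn checksum, used to cut false positives on card-like digit runs."""
    digits = [int(c) for c in number if c.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def scan_and_redact(text: str):
    """Return (redacted_text, findings) for a prompt about to be sent."""
    findings = []

    def ssn_sub(match):
        findings.append("ssn")
        return "[REDACTED-SSN]"

    def card_sub(match):
        if luhn_valid(match.group()):
            findings.append("card")
            return "[REDACTED-CARD]"
        return match.group()  # not a valid card number; leave untouched

    text = SSN_RE.sub(ssn_sub, text)
    text = CARD_RE.sub(card_sub, text)
    lowered = text.lower()
    findings.extend("keyword:" + kw for kw in KEYWORDS if kw in lowered)
    return text, findings


def decide(findings) -> str:
    """Assumed policy: block on identifiers, flag on keywords only."""
    if any(f in ("ssn", "card") for f in findings):
        return "block"
    return "flag" if findings else "allow"


# 4111 1111 1111 1111 is a widely used test card number, not a real account.
sample = "Customer SSN 123-45-6789, card 4111 1111 1111 1111. CONFIDENTIAL roadmap."
redacted, found = scan_and_redact(sample)
print(redacted)
print(found, decide(found))
```

Pattern matching of this kind catches well-structured identifiers but misses free-form secrets such as source code or strategy documents, which is one reason the measures above pair DLP with monitoring, governance, and training.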

Conclusion

Shadow AI isn’t science fiction; it’s happening now. Every time an employee pastes something into a chatbot, there’s a chance that helpful prompt becomes a data leak. The cost? Lost IP, compliance fines, and strategic disadvantages. Organizations must accept that AI usage is widespread and focus on managing data rather than banning tools outright. By implementing DLP, careful policies, and employee awareness training, security teams can let AI boost productivity without handing secrets to the outside world.

